A group of researchers from CERN, the Massachusetts Institute of Technology, and Staffordshire University has developed and implemented a ground-breaking algorithm for reconstructing particles from data collected at the Large Hadron Collider.

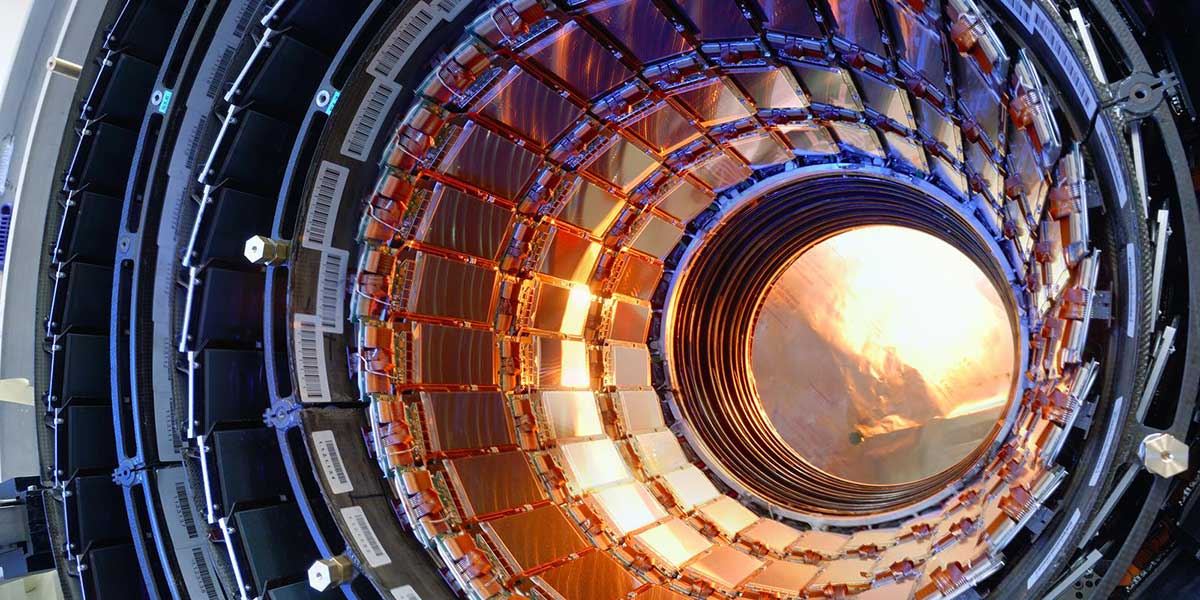

The Large Hadron Collider (LHC) is the most powerful particle accelerator ever built. It sits in a tunnel 100 meters underground at CERN, the European Organization for Nuclear Research, near Geneva in Switzerland. It hosts long-running experiments that give physicists from all over the world deeper insight into the nature of the universe.

The work is part of the Compact Muon Solenoid (CMS) experiment, one of seven experiments now running at the accelerator. CMS uses detectors to study the particles produced when particles collide.

The project, carried out in preparation for the high-luminosity upgrade of the Large Hadron Collider, is the subject of a new academic paper, “End-to-end multiple-particle reconstruction in high occupancy imaging calorimeters with graph neural networks”, recently published in European Physical Journal C. The High-Luminosity Large Hadron Collider (HL-LHC) project aims to improve the LHC's performance in order to increase the potential for discoveries after 2029. The HL-LHC will increase the number of proton-proton interactions in each event fivefold, from 40 to 200.

The study was directed by Professor Raheel Nawaz, Pro Vice-Chancellor for Digital Transformation at Staffordshire University. He explained: “Limiting the increase of computing resource consumption at large pileups is a necessary step for the success of the HL-LHC physics programme, and we are advocating the use of modern machine learning techniques to perform particle reconstruction as a possible solution to this problem.”

He continued: “Working on this project has been both a pleasure and an honor, and it has the potential to determine the future path of research on particle reconstruction by employing a more advanced AI-based solution.”

Dr. Jan Kieseler of the Experimental Physics Department at CERN added: “This is the first single-shot reconstruction of approximately 1000 particles from and in an environment that is more difficult than anything else that has ever been seen, as each proton-proton collision involves 200 simultaneous interactions. It is a significant step forward for future particle reconstruction to demonstrate that this innovative approach, which combines dedicated graph neural network layers (GravNet) and training methods (Object Condensation), can be applied to such challenging tasks while maintaining compliance with resource constraints.”